Singapore Harbor and the Failure of Geospatial Marketing

If the global economy were collapsing, how would we know?

In mid-February, as parts of Asia wrestled with COVID-19, Twitter user @IAMIRONMAN7 had some data to share, and he wasn’t shy about connecting the dots:

As I doom-scrolled through the responses, folks familiar with Singapore chimed in that this looked like business-as-usual to them, with anecdata reaching High Art in this flex by one Ben Eliott-Yates:

But what troubled me as a geospatial professional was that not one of the tweeters in the thread thought to say “there’s probably data that could answer this question.”

And that’s our failure: the failure of the geospatial industry’s marketing message to resonate even faintly with a crowd willing to debate on social media whether Singapore Harbor was crowded or not. It fails because our industry’s default setting is to sell rasters and vectors and point clouds, plus the complicated tools that are supposed to make sense of it all.

Answers? That’s the customer’s problem.

Bill Emison recounted how too many LiDAR projects ended with handing the client a hard drive holding a few terabytes of LAS files, along with the Net-30 invoice. For most of us, the project is over when we zip our GeoTIFFs or shapefiles, maybe with some metadata, and email the client a download link.

Will Cadell put it more succinctly at last week’s virtual FOSS4G-UK gathering:

Geospatial people excel at building geospatial things for other geospatial people.

While no one ever starved selling pixels to the NGA, the complacent delivery of the same-old/same-old to the legacy set of tried-and-true customers (often a government entity) has limited our market reach as an industry and left the “vision thing” to others. You can point to new-ish players like Descartes Labs and Orbital Insight, who get this and are building businesses around “answers” rather than raw data: I say good on them, and I’d add that they’re the exceptions that prove the rule.

* * * * * *

So, was Singapore Harbor unusually crowded in mid-February?

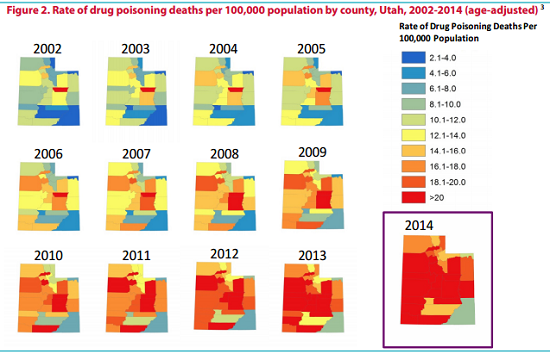

As I wondered about that myself, I came across this web map cooked up by Kristof Van Tricht on Google Earth Engine, which houses a bunch of different datasets, including the Sentinel SAR stuff that I knew could answer my question. Let’s go to the animated GIF:

Maybe it’s the hindsight talking, but to this untrained eye the collapse of the global economy doesn’t seem obvious.

The geospatial industry never tires of trumpeting the ubiquity and centrality of Location. But until we learn how to deliver meaning and not just raw data pushed through a pipe, the potential of the new markets we’ve been telling each other about for years will remain unrealized, likely to be seized by others.

— Brian Timoney